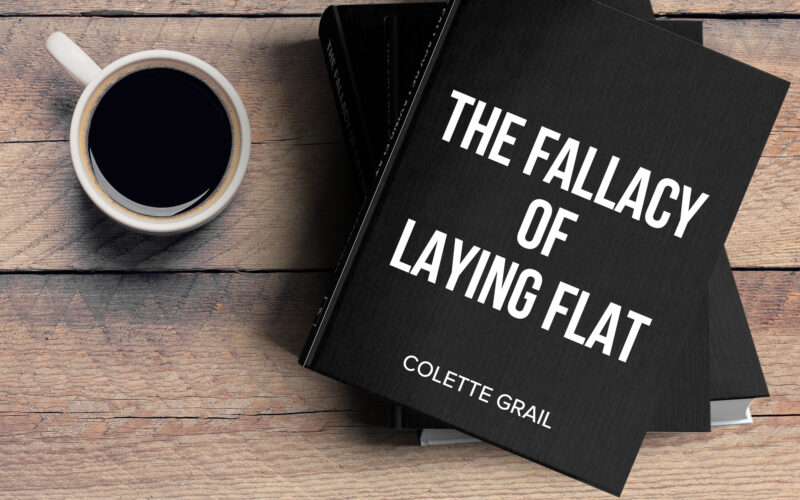

I have been “greenlit” by #newdegreepress. They’ve reviewed my work so far on my book “The Fallacy of Laying Flat”. I’ve got the words, the format […] Read More

Big Data Solving Big Problems

I have been “greenlit” by #newdegreepress. They’ve reviewed my work so far on my book “The Fallacy of Laying Flat”. I’ve got the words, the format […] Read More

Will YOU die from COVID-19? That’s really the burning question. With all the floating conspiracy theories commingled with distressing talleys, there’s a definite fuzziness to […] Read More

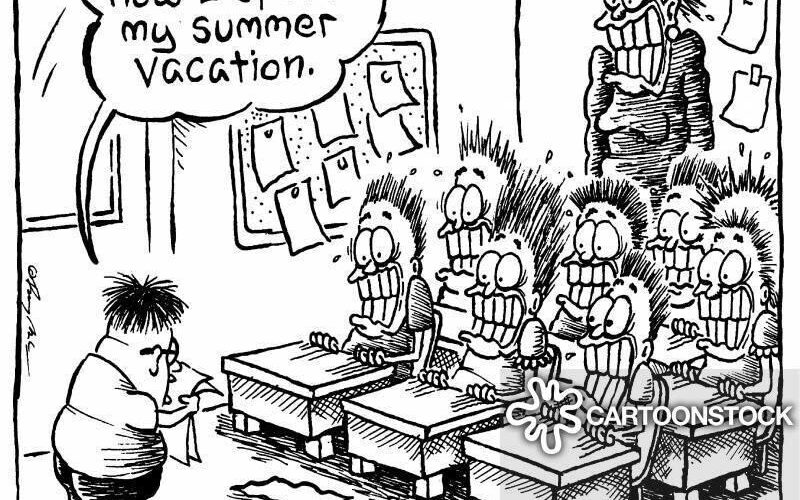

Chapter X of The Fallacy of Laying Flat Before we delve into the art and science of how Big Data derives solutions, let’s look at […] Read More

I watched a marketing webinar last night. It was free. Typical of that genre, the point was to give away some information but sell […] Read More

![By Piergiuliano Chesi (Own work from scan) [Public domain], via Wikimedia Commons](https://whatsthebigdataidea.com/wp-content/uploads/2018/06/1024px-Trans_World_Airlines_ticket_1973-08-03-800x417.jpg)

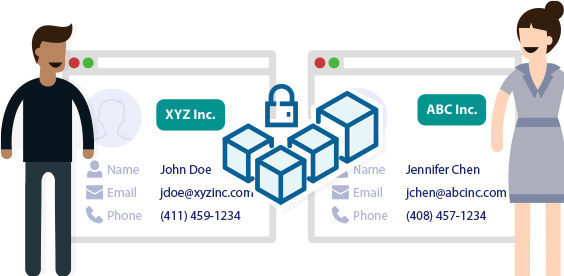

Part 3 Expanding Operational: Blockchain Deployments for Impact Expanding Operational: Blockchain Deployments for Impact In Part 1, we explored the building blocks of blockchain […] Read More

Part 2 Moving from Tactical to Operational: Contained Blockchain Deployments In Part 1, we explored the building blocks of blockchain – bitcoin and smart […] Read More

Big Data Takes on Big (Boob) Problems Big Data application comes in many shapes and sizes and the Big Data Bra is Big Data wrapped […] Read More

My pre-launch book sale campaign is underway!! Here’s a taste of what’s up for sale! What’s Your Problem Solving world hunger or fending off COVID-19 […] Read More

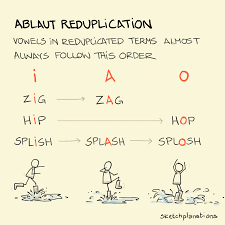

I have biases; you have biases. Why is it ding-dong and not dong-ding? PIng-pong and not Pong-ping? Sing-song. Tick tock. Hip hop. We can’t help […] Read More

My friend @michaelsagnermd wasn’t very complimentary but he was right. I think it fits better as “Any idiot can start a book.” Everyone has a […] Read More

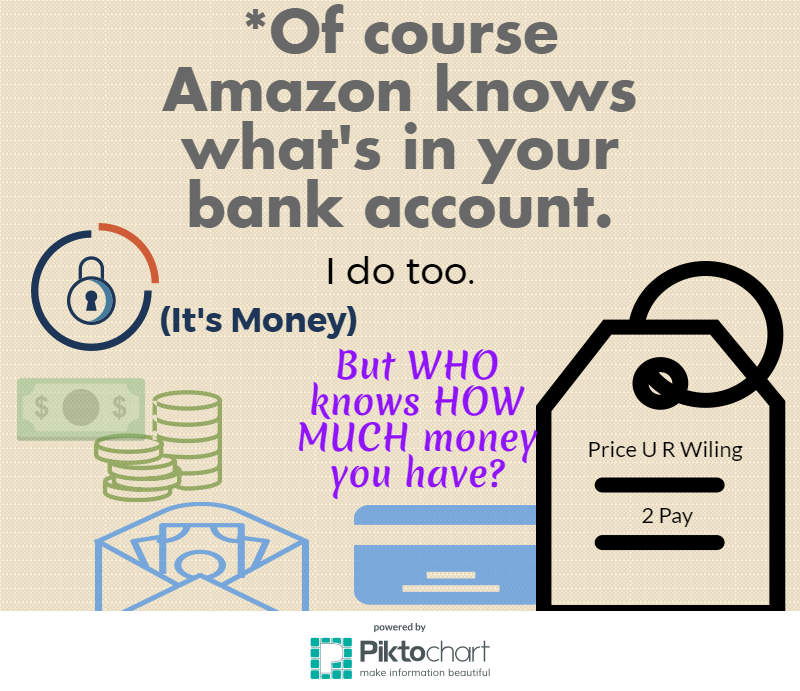

#amazonknowswhatsinyourwallet #privacy #personalization Wouldn’t it be nice if what you were just thinking of something you needed to get . . . and it came […] Read More

Almost 15 years ago, finding out what your friends were up to meant going to individual FB pages to check on them. Click. Read. Click. […] Read More

Did COVID-19 start in a lab or naturally progress from animals to humans? While there’s no shortage of speculation on #COVID-19 – especially how it […] Read More

#coronavirus #techhacks Touching is tangency. We didn’t realize how much we touched stuff until health and life demanded you pay attention. Realizing how much you […] Read More