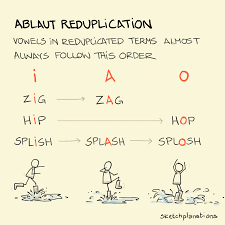

I have biases; you have biases. Why is it ding-dong and not dong-ding? Ping-pong and not pong-ping? Sing-song. Tick tock. Hip hop. We can’t help ourselves; it’s what we’ve always done, but are you aware of this #artificialintelligence training? It’s even got a dirty-sounding name: ablaut reduplication. (Gesundheit!) That is the pattern by which the vowel changes in a repeated word to form a new word or phrase with a specific meaning, and it goes like this: I goes before A goes before O.
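For the code-curious, here is a minimal Python sketch of the I>A>O check. The function name `follows_ablaut_order` is made up for this post, and it assumes the first vowel of each word is the one that matters, which is a simplification rather than a real linguistic rule.

```python
def follows_ablaut_order(phrase):
    """Return True if the first vowels of the words run I, then A, then O."""
    # Rank of each vowel in the I > A > O ordering (assumption: only these three count).
    order = {"i": 0, "a": 1, "o": 2}
    words = phrase.lower().replace("-", " ").split()
    if len(words) < 2:
        return False  # nothing is reduplicated in a single word
    ranks = []
    for word in words:
        # Take the first vowel in the word; give up if it is not I, A, or O.
        first_vowel = next((ch for ch in word if ch in "aeiou"), None)
        if first_vowel not in order:
            return False
        ranks.append(order[first_vowel])
    # Each word's vowel must come strictly later in the ordering than the previous one.
    return all(a < b for a, b in zip(ranks, ranks[1:]))


for phrase in ["ding-dong", "dong-ding", "ping-pong", "tick tock", "hip hop"]:
    print(phrase, follows_ablaut_order(phrase))
```

Only dong-ding fails the check; the other four pass.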

We’re also trained to order adjectives accordingly: Quantity or number · Quality or opinion · Size · Age · Shape · Color · Proper adjective. That’s why it’s a cute little old dog and not an old cute little dog.
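The adjective order above can be sketched as a simple sort in Python. The tiny `CATEGORY` lookup is a hypothetical, hand-made lexicon for illustration only; a real system would need a far larger one, and plenty of adjectives do not fit neatly into one slot.

```python
# The ordering from the post, from first slot to last.
ADJECTIVE_ORDER = ["quantity", "opinion", "size", "age", "shape", "color", "proper"]

# Hypothetical mini-lexicon mapping a few adjectives to their slot.
CATEGORY = {
    "three": "quantity",
    "cute": "opinion",
    "little": "size",
    "old": "age",
    "round": "shape",
    "brown": "color",
    "French": "proper",
}


def order_adjectives(adjectives):
    """Sort adjectives by the position of their category in ADJECTIVE_ORDER."""
    return sorted(adjectives, key=lambda adj: ADJECTIVE_ORDER.index(CATEGORY[adj]))


print(" ".join(order_adjectives(["old", "cute", "little"])), "dog")
# prints: cute little old dog
```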

So #google didn’t create these rules, these algorithms. Some country gentlemen in merry old England decided there needed to be an order, and this is where we are today. Some might recall the adjective order from a grammar lesson long ago, but most never even notice the I>A>O ordering. We accept it, repeat it, and live it without being cognizant of the rule, let alone feeling the need to dispute it.

Importantly, Google wasn’t taught those rules about I>A>O or adjective order. Google isn’t taught grammar. Google consumes mass quantities of #English and mimics what it finds therein. This is a very soft example of how bias is built in when training #AI. Google didn’t invent the bias, but it does “learn” it.
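To make "consumes and mimics" concrete, here is a toy Python sketch. It is emphatically not how Google or any real model works; it just counts which word order shows up more often in a made-up three-sentence corpus and repeats the majority. The names `learn_pair_counts` and `preferred_order` are invented for this example.

```python
from collections import Counter


def learn_pair_counts(corpus):
    """Count every adjacent word pair, in the order it appears in the text."""
    counts = Counter()
    for sentence in corpus:
        words = sentence.lower().split()
        counts.update(zip(words, words[1:]))
    return counts


def preferred_order(counts, a, b):
    """Return whichever ordering of (a, b) was seen more often; ties keep (a, b)."""
    return (a, b) if counts[(a, b)] >= counts[(b, a)] else (b, a)


# Made-up corpus: nobody told the counter a rule, it only sees usage.
corpus = [
    "the clock went tick tock",
    "tick tock all night long",
    "tock tick said nobody ever",
]
counts = learn_pair_counts(corpus)
print(preferred_order(counts, "tock", "tick"))
# prints: ('tick', 'tock')
```

The counter never sees the I>A>O rule. It picks up the preference purely from what people already wrote, which is the same way the bias gets "learned."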

Find out more about how #BigData, bias, and #decisionmaking intertwine in my upcoming book #thefallacyoflayingflat set to publish in #fall2022.

Have a great weekend!

#friday #tgif #bigdata #ablautreduplication #machinelearning #ML